By: John P. Conley on Feb 12, 2019

1. Introduction

Quantity has its own quality. The telegraph was invented in the 1840s, the telephone in 1876, and the Internet (through TCP/IP) in 1990. Each of these communications technologies was transformative in its own way. Of course, the number of telegrams, phone calls, and internet connections increased as costs came down. As costs continued to fall, however, qualitatively different uses for these technologies became feasible.

For example, in the beginning the Internet was mainly confined to delivering emails and transferring text files between research universities. As it became cheaper, it became practical to deliver web pages, graphics, audio and video content, and interactive games. Today, it is no longer necessary to buy physical copies of books, records, or movies. We don’t even need to store our personal files locally but can instead trust them to a cloud service. We can access any kind of information we want, from maps with real-time traffic conditions and reviews of the restaurant we happen to be walking by, to our bank balances and medical records. We can maintain constant contact with our friends and family. We can buy, sell, work, and even employ without ever leaving our homes. In other words, the cost of communications has gotten low enough that it has qualitatively changed our economy and our society, and created entirely new technologies and services that would have been difficult to even imagine before the era of ubiquitous connectivity.

Blockchain is at a similarly early point in its development. The two major public blockchains, Bitcoin and Ethereum, have a combined capacity of about ten to twelve transactions per second, which implies that something on the order of 350 million transactions can be processed per year. Costs range from a few cents to a few dollars per transaction.1 By way of comparison, PayPal processes approximately 10 billion transactions per year and the Visa network does about 100 billion. Of course, transaction costs are also relatively high on these networks (on the scale of one to three percent of the transaction value) and neither uses distributed ledger technology.

In this paper, we describe a vision for ubiquitous blockchain. We show how the technological approach used by The Geeq Project generates a scalability and cost structure that make use cases feasible that would otherwise be impossible. In turn, this enables qualitatively different ways to utilize information and interact as a society.

2. Physical Resource Requirements of GeeqChain

Readers may wish to skip the next two sections, which detail how we calculate the resource and economic costs of instances of GeeqChain and of individual transactions. Readers interested primarily in use cases are invited to skip to Sections 7 and 8.

The Ethereum network writes blocks about every ten seconds and processes around eight transactions per second (tps) for a total of approximately 250M transactions per year. The Bitcoin network writes blocks about every ten minutes and runs at around three tps for a total of approximately 100M transactions per year. GeeqChains can be configured to have different block writing intervals and to run at different speeds. Table 1 shows the number of transactions that an instance of GeeqChain can process per year depending on the speed that is chosen.

Table 1. Transactions per year at different speeds

Ethereum transactions are about .5kB on average although there is no fixed size. Ethereum transactions are often more complicated than simple token exchanges (interacting with smart contracts or placing documents in the blockchain, for example). Bitcoin transactions seem to be about 6kB on average but may include several individual payments as well as UTXOs. For the purposes of this paper, we will generally assume that a single GeeqChain transaction is .5kB on average.2

There are 32,000 or so nodes on the Ethereum network and about 10,000 on the Bitcoin network. Tendermint, Delegated Proof of Stake, Practical Byzantine Fault Tolerance and other PoS consensus mechanisms generally have 100 or fewer validating nodes, although they are often reinforced or staked by a larger number of users or other network participants. Hyperledger Fabric can accommodate several approaches to consensus, but often uses PoS or Proof of Authority networks with 25 or fewer nodes. We will consider a single instance of a GeeqChain using Proof of Honesty on networks with between 10 and 100 nodes in the sections below.

The three major resources needed by blockchain validation networks are bandwidth, storage, and computation.

We use the following notation to estimate how much of each resource GeeqChains require and what this implies for the costs of these networks.

- S: Size of a transaction in kB

- N: Number of nodes in the validation network

- B: Block size, in user transactions per block

- T: Total number of user transactions in a given blockchain

- tps: Transactions per second

2.1 Bandwidth

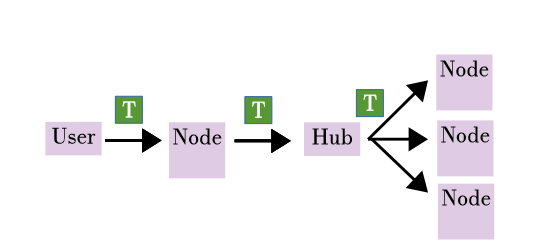

The key thing to notice is that the bottleneck is hub upload speed. A hub uses roughly N times more bandwidth uploading transactions to each node than it uses for downloads, or than an ordinary node uses for either uploads or downloads. The maximum speed of the validation network in tps can therefore be determined by the quality of the hub’s upload broadband connection. The average upload speed for US residential broadband customers varies widely depending on region and carrier, from around 5Mb/s to 140Mb/s.3 In 2017 upload rates in the US averaged 22.79Mb/s.4 Recalling that we must divide this by eight and multiply by 1000 to get kB/s, we find that:

$$(N-1)\left(\frac{S}{N} + S + \frac{S}{N}\right) + S + SN = (N-1)S + \frac{2(N-1)S}{N} + S + SN \approx (N - 1 + 2 + 1 + N)\,S = 2S(N+1)$$

Dividing this total bandwidth by N gives the average bandwidth used by a node/hub per transaction. Multiplying by 31.5M seconds per year gives the annual bandwidth consumed by the entire network at various tps rates.
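The bandwidth arithmetic above can be sketched in a few lines of Python. This is a minimal illustration rather than part of Geeq's code: the function names are hypothetical, 1GB is taken as 10^6 kB, and the hub capacity estimate assumes that the hub's upload cost per transaction is the SN term of the formula (each transaction sent to each of the N nodes).

```python
SECONDS_PER_YEAR = 31_500_000  # the rounded 31.5M seconds per year used in the text


def network_kb_per_tx(S: float, N: int) -> float:
    """Approximate total bandwidth per transaction over the whole network: 2S(N+1) kB."""
    return 2 * S * (N + 1)


def node_kb_per_tx(S: float, N: int) -> float:
    """Average bandwidth per transaction for a single node/hub, in kB."""
    return network_kb_per_tx(S, N) / N


def annual_network_gb(S: float, N: int, tps: float) -> float:
    """Annual bandwidth consumed by the entire network, in GB (1GB = 1e6 kB)."""
    return network_kb_per_tx(S, N) * tps * SECONDS_PER_YEAR / 1e6


def max_tps_for_hub(upload_mbps: float, S: float, N: int) -> float:
    """Assumed hub-limited speed: upload capacity divided by S*N kB per transaction."""
    upload_kb_per_s = upload_mbps / 8 * 1000  # Mb/s -> kB/s, as described in the text
    return upload_kb_per_s / (S * N)


if __name__ == "__main__":
    print(node_kb_per_tx(0.5, 100))
    print(annual_network_gb(0.5, 100, 10))
    print(max_tps_for_hub(22.79, 0.5, 100))
```

Under these assumptions, a hub on the 2017 US average upload rate of 22.79Mb/s could serve a 100 node network at roughly 57tps.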

Table 2. Bandwidth requirements assuming S = 0.5kB

2.2 Storage

Each user transaction generates an additional fee transaction that puts payments for the validators into a system account. In addition, each time a block is written, a fee transaction out of the system account to each node is created. Finally, each block uses additional data for block headers, Active Node List (ANL) transactions, and sometimes, audit transactions. The first is a fixed amount per block and the last two are variable. To estimate the total storage needed, we consider these per transaction and per block costs separately.

The validity of each user transaction is determined by each node independently and generates both a user and a fee transaction to be written into the next block. This means 2SkB is written into the block and stored on N nodes forever (at least in principle).

Block headers include hashes of the Current Ledger States (CLSs), signatures, and various metadata. Let’s estimate that this requires 10SkB. Audit transactions, ANL transactions, and some other transactions are probabilistic and variable. Let’s estimate these at 10SkB per block. Finally, each node writes a fee payment to itself and to all the other nodes out of the system account for each block (independent of the number of transactions in the block). Thus, NSkB is required to write one fee payment transaction for each node in the network for each non-empty block. In total, (20+N)SkB is the fixed storage requirement of each block regardless of the number of transactions it contains. Note, however, that this data is created locally, and so is not transmitted on the network. Now suppose a blockchain contains a total of T transactions and each block contains B user transactions on average. If blocks are written every ten seconds, then B = 10×tps, which implies that a chain with T transactions has T/B = T/(10×tps) total blocks. Given this, the storage requirement of a chain containing T transactions is:

$$2TS + \frac{(20+N)\,TS}{B} = \text{Total size of blockchain in kB}$$

and so,

$$2S + \frac{(20+N)\,S}{10 \times tps} = \text{Average kB per transaction}$$

Table 3: Storage requirements per transactions with S = .5kB and 10 second blocks

To find the annual storage requirements over the entire network, we multiply the number of transactions per year, the average storage required for a transaction, and the number of nodes in the network. Thus, a 100 node network running at 2tps requires a total of 63M × 4kB × 100 ≈ 25.2TB.
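As a check on the storage formulas, here is a short Python sketch. The function names are illustrative only, and it assumes decimal units (1TB = 10^9 kB) and the rounded figure of 31.5M seconds per year.

```python
SECONDS_PER_YEAR = 31_500_000  # rounded figure used in the text


def avg_kb_per_tx(S: float, N: int, tps: float, block_seconds: float = 10) -> float:
    """Average storage per user transaction on one node: 2S + (20+N)S/B, with B = block_seconds*tps."""
    B = block_seconds * tps  # user transactions per block
    return 2 * S + (20 + N) * S / B


def annual_network_storage_tb(S: float, N: int, tps: float, block_seconds: float = 10) -> float:
    """Storage added per year across all N nodes, in TB (1TB = 1e9 kB)."""
    tx_per_year = tps * SECONDS_PER_YEAR
    return tx_per_year * avg_kb_per_tx(S, N, tps, block_seconds) * N / 1e9


if __name__ == "__main__":
    print(avg_kb_per_tx(0.5, 100, 2))
    print(annual_network_storage_tb(0.5, 100, 2))
```

With S = .5kB, N = 100 and 2tps, this gives 4kB per transaction and roughly 25TB per year across the network, in line with the example above.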

Table 4: Total storage requirements with S = .5kB and 10 second blocks

2.3 Compute

Computational costs should be very small, but are difficult to estimate. For each block created, a node must do the following:

- Check stand-alone validity: Check the balance and signature of each transaction against its own, locally maintained CLS. This may be done as a sanity check when the transaction arrives from the user or when it is sent in a bundle from the hub if the node is not the originator of the transaction.

- Create and sign a bundle of transactions to send to the hub.

- Receive a bundle of transactions from the hub.

- Check honesty: Verify that the CLS hash each of the other nodes included in the bundle it sent to the hub agrees with the node’s own local hash. If not, initiate an audit.

- Check joint validity of all transactions in the block: Check to see if any spends are coming from the same account. If so, check that they are not identical (duplicative) and that they do not add up to more than the account contains.

- Create fee transactions.

- Gather all valid user and fee transactions into a block, write the header, and commit it to the chain.

- Update the ledger state using the valid transactions in the block.

- The hub must also send each transaction to each of the N nodes in the network.

All in all, this is a fairly trivial amount of computational effort. Each transaction requires a search against the ledger, a signature and balance check, the creation of one or two fee transactions, and the updating of balances in two or three accounts on the ledger. Joint validity is easy to check, and while audits might take non-trivial computational effort to complete, they should be very rare. Finally, some additional compute cycles are used in ordinary message creation and network communications. Besides possible audits, this should be no more than a background process that could run on home computers and even mobile devices.
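To give a feel for how light the joint validity check is, the following Python sketch flags accounts whose spends in a block are duplicated or jointly exceed the balance. It is only an illustration under stated assumptions: the `Spend` record, the `find_joint_conflicts` function, and the use of a plain dictionary for the ledger state are all hypothetical and are not taken from Geeq's implementation. What a node does with a flagged account is left open.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Spend:
    tx_id: str    # hypothetical unique transaction identifier
    account: str  # account the spend is drawn from
    amount: int   # amount in the smallest token unit


def find_joint_conflicts(spends: list[Spend], balances: dict[str, int]) -> dict[str, str]:
    """Return {account: reason} for accounts whose spends conflict within one block."""
    by_account: dict[str, list[Spend]] = defaultdict(list)
    for spend in spends:
        by_account[spend.account].append(spend)

    conflicts: dict[str, str] = {}
    for account, group in by_account.items():
        if len(group) < 2:
            continue  # a lone spend was already covered by the stand-alone check
        tx_ids = [s.tx_id for s in group]
        if len(set(tx_ids)) < len(tx_ids):
            conflicts[account] = "duplicate"
        elif sum(s.amount for s in group) > balances.get(account, 0):
            conflicts[account] = "overspend"
    return conflicts


if __name__ == "__main__":
    block = [
        Spend("a1", "alice", 60),
        Spend("a2", "alice", 50),
        Spend("b1", "bob", 10),
        Spend("c1", "carol", 5),
        Spend("c1", "carol", 5),
    ]
    print(find_joint_conflicts(block, {"alice": 100, "bob": 10, "carol": 100}))
```

The check is a single pass over the block plus one sum per account, which is consistent with the claim that joint validity is cheap relative to storage and bandwidth.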

From a resource standpoint, the compute required by GeeqChains is nominal. However, there may be instances of GeeqChain that run complicated applications or smart contracts. One of the purposes of Geeq is to provide trustless virtual machine services to users. Compute could become a non-trivial requirement on such chains. It may even be that nodes will have to employ more powerful computers to join such networks or risk being removed from the ANL for being non-responsive, out of sync, or incorrect in their execution of smart contracts or applications. This does not, however, affect the underlying resource requirements of the validation layer, which remain very small.

3. Cost Estimates

The calculations above estimated the physical resource requirements of a Geeq network. In this section, we translate these into costs.

Connectivity costs vary widely. Cloud providers offer bandwidth at prices between $.02 and $.08 per GB. A price of $80 per month for either 1TB or unlimited data is a typical broadband price for US households. This could be construed as an average cost of $.08 (or less) per GB. If a node does not exceed its maximum allocation (including services provided to Geeq), then the opportunity cost is actually zero per GB. For the calculations below we will take $.05 per GB as the price of broadband.

Storage costs also vary widely. HDDs cost around $.03 per GB and might use $.005 in electricity per year. Assuming a five year lifespan, this is a cost of roughly $.05 per GB for five years of storage. Storage costs on cloud services vary widely and often include connectivity and compute as part of a package. Modest amounts of cloud storage can be had for free in many cases. Larger amounts of enterprise level cloud storage can be had for prices ranging from $.05 to $.50 per GB per year. Obviously, these costs will fall in the future.5 We assume cloud storage costs $.20 per GB per year, or $1 per GB for five years.

Compute could be provided locally at a marginal cost of zero. Alternatively, we can consider it as using up a fraction of a laptop or desktop with a lifespan of five years. If running Geeq used 25% of the cycles of a device costing $1000, this would come out to $50 per year. Hosting an instance of a GeeqChain node on Ipage or Hostgator would probably cost on the order of $30 to $100. We will take compute costs as $100 per year.

Table 5: Total cost of bandwidth per year

Table 5 calculates the total bandwidth cost of running an instance of GeeqChain for an entire year with various network sizes and tps rates. Bandwidth costs turn out to be a relatively insignificant component of total costs. For example, a single node on a 100 node network running at 10tps would pay at most $16 per year for the required bandwidth. Note that 10tps is about the speed of the entire Ethereum and Bitcoin networks combined.
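The $16 figure can be reproduced with a short Python sketch. The function name is hypothetical, and the calculation assumes the 2S(N+1) bandwidth estimate from Section 2.1, the $.05 per GB price chosen above, and 1GB = 10^6 kB.

```python
SECONDS_PER_YEAR = 31_500_000  # rounded figure used in the text


def annual_bandwidth_cost_per_node(S: float, N: int, tps: float,
                                   price_per_gb: float = 0.05) -> float:
    """Average yearly bandwidth cost for one node, in dollars."""
    kb_per_tx_per_node = 2 * S * (N + 1) / N  # network total divided by N
    gb_per_year = kb_per_tx_per_node * tps * SECONDS_PER_YEAR / 1e6
    return gb_per_year * price_per_gb


if __name__ == "__main__":
    # 100 node network at 10tps with .5kB transactions
    print(round(annual_bandwidth_cost_per_node(0.5, 100, 10), 2))
```

This is an average over nodes. Because the hub carries most of the upload load, a node's actual bill would depend on how often it serves as hub.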

Table 6: Total cost of storage per year

Table 6 calculates the total storage cost of running an instance of GeeqChain for an entire year with various network sizes and tps rates (and assuming all transactions are stored for five years). Storage costs turn out to be the most significant component of total costs. Note that for large network sizes with high tps rates, each user transaction requires storing approximately 2SkB of data. For example, if each user transaction is .5kB, then approximately 1kB needs to be stored on each node of the network for each user transaction. This has several important implications:

GeeqChain is an approach to distributed ledger technology, which, in turn, is a special case of a distributed data system. As such, each node keeps a full copy of all the data. In other words, one of the services that GeeqChain provides is redundant, distributed data backup. By its nature, this is resource intensive, and cost is directly proportional to the size of the network (the number of nodes).

Distributed data storage can vary widely in quality and cost. As an example, if data only needs to be stored on each node’s local hard drive for a year, costs might be as low as $.01 per GB (or even zero if a node has surplus capacity). If AWS or a similar cloud service is used, the cost might be twenty times as much per year or more.

3.1 Different use cases are likely to call for very different sorts of storage and availability.

For example, a telemetry chain in which data from IoT devices (or hashes of data) is kept does not have a meaningful ledger state in many cases. The transactions in the chain are what are important, not some summary of these in the ledger. On the other hand, most of these transactions will never be looked at. Only in the case that the device owner needs to prove something about what the device sensed or did will the transaction become useful. For a micropayments chain, in contrast, the ledger is the only thing that matters. The blocks in the chain must support the ledger state, but what matters are the balances in the accounts. Other applications may fall somewhere in the middle.

Both the cost and the sheer volume of the data that GeeqChains might accumulate suggest that some additional architectural decisions should be made. A network running at 500tps accumulates 16TB of data per year. Each node would need to be paid $16k just to break even. Clearly 16TB is more than one would want to keep on a local HDD. It also creates a significant barrier to entry for new nodes. Downloading 16TB alone would cost $800 at $.05 per GB.
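These figures follow from the high-tps approximation of about 2SkB stored per user transaction. A rough Python sketch, with hypothetical function names and assuming S = .5kB, $1 per GB for five years of storage, $.05 per GB of bandwidth, and 1GB = 10^6 kB:

```python
SECONDS_PER_YEAR = 31_500_000  # rounded figure used in the text


def per_node_storage_gb(tps: float, S: float = 0.5, years: float = 1.0) -> float:
    """Approximate data accumulated on one node, using 2S kB per user transaction."""
    return 2 * S * tps * SECONDS_PER_YEAR * years / 1e6


def storage_cost(gb: float, price_per_gb_5yr: float = 1.0) -> float:
    """Cost of keeping the data for five years at the assumed cloud price."""
    return gb * price_per_gb_5yr


def download_cost(gb: float, price_per_gb: float = 0.05) -> float:
    """Bandwidth cost for a new node to download the existing chain."""
    return gb * price_per_gb


if __name__ == "__main__":
    gb = per_node_storage_gb(500)
    print(gb, storage_cost(gb), download_cost(gb))
```

At 500tps this gives about 15.75TB per node per year, roughly $16k in storage and roughly $800 to download, matching the rounded numbers above.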

3.2 Strategies might include:

- Limiting the tps on instances: At 10tps, approximately 300GB of data per node per year is accumulated. While this is a lot, it is a manageable amount for a home computer. It would require using no more than 20% of a node’s residential broadband capacity. Downloading a year’s worth of blocks to become a node would cost $15, so the barrier to entry would not be terribly high. Each node would only need to be paid $410 per year to break even, so joining GeeqChain as a node becomes a smaller decision.

- Creating light nodes as well as full nodes: Having 100 nodes in a validation network where nodes can come and go as they please, voluntarily and anonymously, provides increased confidence in the security of the chain. Keeping 100 copies of the entire blockchain, however, may not be necessary or worth the cost.

- Using checkpoints: When the ledger state and not the data in the chain itself is the thing of value, maintaining the full chain may not be necessary. As long as the check-pointing system allows users to verify the honesty of the ledger, the data in the chain can be truncated and discarded periodically.

- Giving instances of GeeqChain a finite lifetime: An instance of GeeqChain might be limited to 20tps, 25 nodes and also have a limit built into the genesis block that allows the application layer to run only for one year, and the entire chain for one additional month. This would mean that each node would have approximately 630GB in storage by the time the chain reached the end of its lifespan. Nodes would keep the data for an additional month to allow anyone who was interested to download and store it, and to allow the users and nodes to move the GeeqCoins they kept on the validation layer to another instance of GeeqChain.
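Limiting tps and giving a chain a finite lifetime both amount to choosing a speed cap that keeps per-node storage within a budget. The sketch below inverts the approximation of 2SkB stored per user transaction. The function name and the idea of a fixed storage budget are illustrative assumptions, not part of the GeeqChain design.

```python
SECONDS_PER_YEAR = 31_500_000  # rounded figure used in the text


def max_tps_for_storage_budget(budget_gb: float, lifetime_years: float = 1.0,
                               S: float = 0.5) -> float:
    """Highest average tps that keeps one node's stored data within budget_gb.

    Uses the approximation of 2S kB stored per user transaction and 1GB = 1e6 kB.
    """
    kb_budget = budget_gb * 1e6
    kb_per_tps = 2 * S * SECONDS_PER_YEAR * lifetime_years
    return kb_budget / kb_per_tps


if __name__ == "__main__":
    # A chain whose application layer runs for one year with a 630GB budget per node
    print(max_tps_for_storage_budget(630))
```

A 630GB budget over a one year lifetime corresponds to a cap of about 20tps, which is the configuration described in the last strategy.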

3.3 The Advantages of GeeqChain: Federated Public Blockchains

Running Geeq’s validation layer code is computationally light. The opportunity costs may, in fact, be zero for nodes. The estimate below imposes a “lumpiness” on these costs that may not be present in real implementations. However, the effect of this assumption is to bias per-transaction costs upwards for networks with smaller tps.

Table 7: Total cost of Compute per year

Table 7 estimates the total compute costs of running an instance of GeeqChain for an entire year with various network sizes and tps rates. Note, however, that compute costs scale linearly with the number of nodes on the network.

Table 8: Total cost of the network per year

Table 8 adds up Tables 5, 6, and 7 to find the total annual cost of various network configurations.

Table 9: Average cost of one transaction with 10 second blocks

Table 9 summarizes the cost per transaction on various types of GeeqChains.
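The way the three cost components combine can be sketched as follows. This is a rough model built from the formulas and prices stated earlier ($.05 per GB of bandwidth, $1 per GB for five years of storage, $100 of compute per node per year), with hypothetical function names. The tables may have been computed with different rounding, so the output should be read as indicative only.

```python
SECONDS_PER_YEAR = 31_500_000  # rounded figure used in the text


def annual_network_cost(S: float, N: int, tps: float,
                        bandwidth_price: float = 0.05,
                        storage_price_5yr: float = 1.0,
                        compute_per_node: float = 100.0,
                        block_seconds: float = 10) -> float:
    """Total yearly cost of one network in dollars: bandwidth + storage + compute."""
    tx_per_year = tps * SECONDS_PER_YEAR
    bandwidth_gb = 2 * S * (N + 1) * tx_per_year / 1e6
    kb_stored_per_tx = 2 * S + (20 + N) * S / (block_seconds * tps)
    storage_gb = kb_stored_per_tx * tx_per_year * N / 1e6
    return (bandwidth_gb * bandwidth_price
            + storage_gb * storage_price_5yr
            + compute_per_node * N)


def cost_per_tx(S: float, N: int, tps: float) -> float:
    """Average cost of one user transaction in dollars."""
    return annual_network_cost(S, N, tps) / (tps * SECONDS_PER_YEAR)


if __name__ == "__main__":
    for nodes, speed in [(10, 10), (100, 10), (10, 10_000)]:
        cents = cost_per_tx(0.5, nodes, speed) * 100
        print(f"N={nodes}, tps={speed}: {cents:.5f} cents per transaction")
```

In this model, storage dominates and the fixed per-block and compute costs fade as tps rises, so the cost per transaction per node settles near $0.000001, or about one ten-thousandth of a cent.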

4. Conclusions: GeeqChain’s Low Costs Open Markets for IoT and Micropayments on Blockchains

Transactions cost between six one-hundredths of a cent and one one-thousandth of a cent each, depending on the network. GeeqChain’s architecture makes it practical to consider using blockchain in applications such as recording IoT telemetry and micropayments where the value of each transaction is small, but the number of transactions is large.

Costs are more or less linear in the number of nodes used on the network.

The major portion of the cost is storage. If a cheaper solution is found than AWS at $1 per GB, costs could be reduced by an order of magnitude or more.

Networks with smaller tps are less efficient, but all networks approach a cost of one ten-thousandth of a cent per transaction per node on the network.

Table 10: Total service cost per year

Table 10 gives a sense of what various levels of service would cost on GeeqChain. This table is designed with IoT telemetry primarily in mind. For example, a fire or burglar alarm that recorded its state of readiness once per day could do so at a cost ranging between .4 cents and 20 cents per year. Devices that only report to the chain when an event occurs would pay costs in proportion to the frequency of the events. Hourly reports cost between 50 cents and 28 dollars per year. This frequency might be useful for industrial machinery controls that report volume or the numbers of things used or produced. Reports every minute or every second cost between 5 and 17k dollars per year. Infrastructure devices reporting electricity flow through a substation, water through a main, the state of a communications network, and medical device telemetry might need this level of frequency.

As we conclude this estimate of costs, recall the advantages of Geeq’s architecture.

First, the calculations presented here are for a single instance of GeeqChain. A single instance of GeeqChain, running at a modest 20tps, processes more transactions than Bitcoin and Ethereum combined.

Second, Geeq’s architecture permits unlimited scalability: as the volume of transactions demanded increases, new instances of GeeqChain may be added, without competition for validation resources.

Finally, the architectural advantages of Geeq’s completely secure ecosystem for federated blockchains include interoperability between blockchains. Thus, we envision thousands of instances of GeeqChain processing transactions at once and sharing data and tokens as needed.

Notes

- There may be as many as 25,000 instances of Hyperledger deployed, but it is hard to find how many total transactions they execute. We would argue that private blockchain is an oxymoron or at least an exercise in futility (sort of like “write-only memory”), but we will save that discussion for a different day.

- Note that a .5kB transaction includes all the metadata, signatures, and overhead required, as well as around .1kB for an arbitrary data payload. If a larger payload is needed in a single transaction, no additional overhead is required. This implies that a transaction with a payload of .6kB would result in a total transaction size of 1kB and would cost a bit less than twice what a .5kB transaction with a .1kB payload would.

- https://www.pcmag.com/article/361765/the-fastest-isps-of-2018

- https://www.recode.net/2017/9/7/16264430/fastest-broadband-speeds-ookla-city-internet-service-provider

- The cloud services market is very competitive and Kryder’s Law suggests that disk drive density doubles every thirteen months.
